Dell Technologies and VMware are happy to announce the availability of VMware Cloud Foundation 4.1.0 on VxRail 7.0.100.

This release brings support for the latest versions of VMware Cloud Foundation and Dell EMC VxRail to the Dell Technologies Cloud Platform and provides a simple and consistent operational experience for developer-ready infrastructure across core, edge, and cloud. Let’s review the new features.

Updated VMware Cloud Foundation and VxRail BOM

Cloud Foundation 4.1 on VxRail 7.0.100 introduces support for the latest versions of the SDDC components listed below:

- vSphere 7.0 U1

- vSAN 7.0 U1

- NSX-T 3.0 P02

- vRealize Suite Lifecycle Manager 8.1 P01

- vRealize Automation 8.1 P02

- vRealize Log Insight 8.1.1

- vRealize Operations Manager 8.1.1

- VxRail 7.0.100

For the complete list of component versions in the release, please refer to the VCF on VxRail release notes. A link is available at the end of this post.

VMware Cloud Foundation Software Feature Updates

VCF on VxRail Management Enhancements

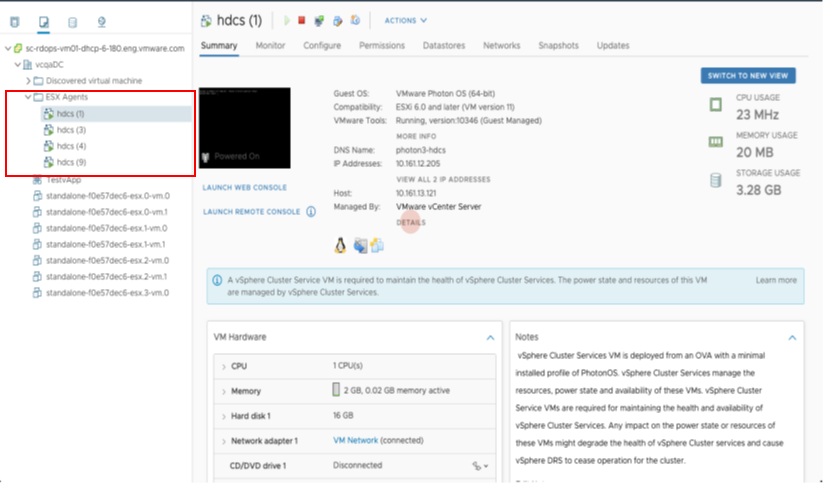

vSphere Cluster Services (vCLS)

vSphere Cluster Services is a new capability introduced in the vSphere 7 Update 1 release, which is included as part of VCF 4.1. It runs as a set of virtual machines deployed on top of every vSphere cluster. Its initial functionality provides the foundational capabilities needed to create a decoupled and distributed control plane for clustering services in vSphere. vCLS ensures that cluster services like vSphere DRS and vSphere HA remain available to maintain the resources and health of the workloads running in the clusters, independent of the availability of vCenter Server. The figure below shows the components that make up vCLS as seen in the vSphere Client.

Figure 1

Not only does vSphere 7 provide modernized data services like embedded vSphere Native Pods with vSphere with Tanzu, but features like vCLS are also beginning the move toward distributed control planes!

VCF Managed Resources and VxRail Cluster Object Renaming Support

VCF can now rename resource objects after creation, including domains, datacenters, and VxRail clusters.

Domains are managed by SDDC Manager, so the SDDC Manager UI now includes additional options for renaming them.

VxRail cluster objects are managed by a given vCenter Server instance. To change a cluster name, change it within vCenter Server. Then go back to SDDC Manager and refresh the UI; SDDC Manager retrieves the new cluster name and displays it.

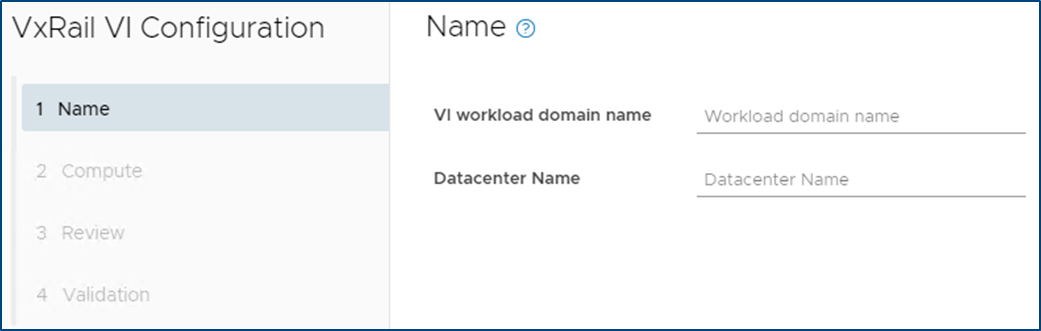

In addition to domain and VxRail cluster object renaming, SDDC Manager now supports a customized Datacenter object name. The enhanced VxRail VI WLD creation wizard now includes an input for the Datacenter name, which is automatically imported into the SDDC Manager inventory during the VxRail VI WLD creation workflow. Note: Make sure the Datacenter name matches the one used during the VxRail Cluster First Run. The figure below shows the Datacenter input step in the enhanced VxRail VI WLD creation wizard from within SDDC Manager.

Figure 2

Being able to customize resource object names makes VCF on VxRail more flexible in aligning with an IT organization’s naming policies.

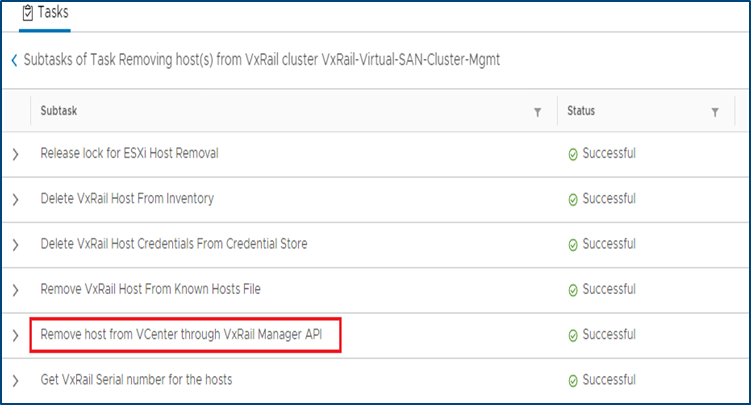

VxRail Integrated SDDC Manager WLD Cluster Node Removal Workflow Optimization

Furthering the Dell Technologies and VMware co-engineering integration efforts for VCF on VxRail, new workflow optimizations have been introduced in VCF 4.1 that take advantage of VxRail Manager APIs for VxRail cluster host removal operations.

When the time comes for VCF on VxRail cloud administrators to remove hosts from WLD clusters and repurpose them for other domains, they use the SDDC Manager “Remove Host from WLD Cluster” workflow. This remove host operation is now fully integrated with native VxRail Manager APIs, so removing physical VxRail hosts from a VxRail cluster is a single end-to-end automated workflow that is started from the SDDC Manager UI or the VCF API. This integration further simplifies and streamlines VxRail infrastructure management operations, all from within common VMware SDDC management tools. The figure below illustrates the SDDC Manager subtasks, which include the new VxRail API calls used by SDDC Manager as part of the workflow.

Figure 3

Note: Removed VxRail nodes require reimaging prior to repurposing them into other domains. This reimaging currently requires Dell EMC support to perform.

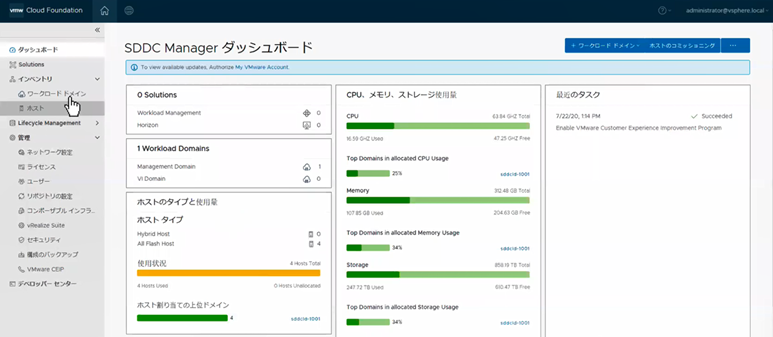

I18N Internationalization and Localization (SDDC Manager)

SDDC Manager now has international language support that meets the I18N Internationalization and Localization standard. Options to select the desired language are available in the Cloud Builder UI, which installs SDDC Manager using the selected language settings. SDDC Manager will have localization support for the following languages – German, Japanese, Chinese, French, and Spanish. The figure below illustrates an example of what this would look like in the SDDC Manager UI.

Figure 4

vRealize Suite Enhancements

VCF Aware vRSLCM

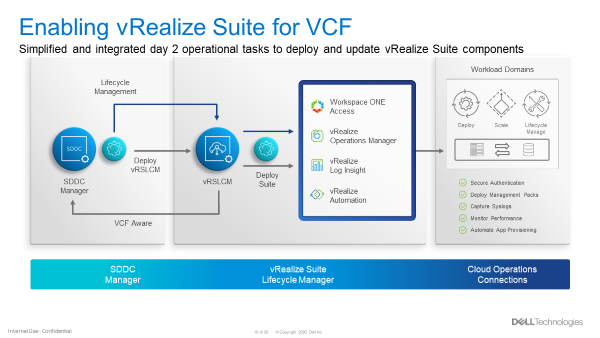

New in VCF 4.1, the vRealize Suite is fully integrated into VCF. SDDC Manager deploys vRSLCM and creates a two-way communication channel between the two components. Once deployed, vRSLCM is VCF aware and reports back to SDDC Manager which vRealize products are installed. The installation of vRealize Suite components uses standardized VVD best-practice deployment designs that leverage Application Virtual Networks (AVNs).

Software Bundles for the vRealize Suite are all downloaded and managed through the SDDC Manager. When patches or updates become available for the vRealize Suite, lifecycle management of the vRealize Suite components is controlled from the SDDC Manager, calling on vRSLCM to execute the updates as part of SDDC Manager LCM workflows. The figure below showcases the process for enabling vRealize Suite for VCF.

Figure 5

VCF Multi-Site Architecture Enhancements

VCF Remote Cluster Support

VCF Remote Cluster Support enables customers to extend their VCF on VxRail operational capabilities to ROBO and Cloud Edge sites, enabling consistent operations from core to edge. Pair this with an awesome selection of VxRail hardware platform options and Dell Technologies has your Edge use cases covered. More on hardware platforms later. For a detailed explanation of this exciting new feature, check out the link to the VMware blog post on the topic at the end of this post.

VCF LCM Enhancements

NSX-T Edge and Host Cluster-Level and Parallel Upgrades

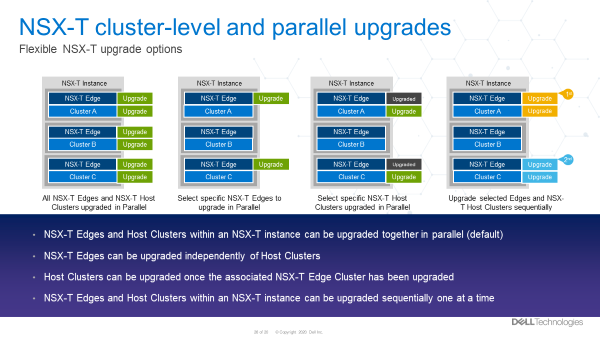

With previous VCF on VxRail releases, NSX-T upgrades were all-encompassing, meaning that a single update required updating all the transport hosts as well as the NSX Edge and Manager components in one operation.

With VCF 4.1, support has been added to perform staggered NSX updates to help minimize maintenance windows. Now, an NSX upgrade can consist of three distinct parts:

- Updating the edges

  - Can be one job or multiple jobs; rerun the wizard for additional jobs.

  - Must be done before moving to the hosts.

- Updating the transport hosts

- Updating the NSX Managers, once the hosts within the clusters have been updated

Multiple NSX Edge and/or host transport clusters within the NSX-T instance can be upgraded in parallel. The administrator can choose some clusters without having to choose all of them. Clusters within an NSX-T fabric can also be upgraded sequentially, one at a time.

NSX-T components can be updated in several ways:

- NSX-T Edges and Host Clusters within an NSX-T instance can be upgraded together in parallel (default)

- NSX-T Edges can be upgraded independently of NSX-T Host Clusters

- NSX-T Host Clusters can be upgraded independently of NSX-T Edges, but only after the Edges have been upgraded

- NSX-T Edges and Host Clusters within an NSX-T instance can be upgraded sequentially one after another.

The figure below visually depicts these options.

Figure 6

These options provide Cloud admins with a ton of flexibility so they can properly plan and execute NSX-T LCM updates within their respective maintenance windows. More flexible and simpler operations. Nice!
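To make the ordering rules above concrete, here is a minimal Python sketch that checks a planned sequence of upgrade jobs. It does not call any NSX-T or SDDC Manager API. The `UpgradeJob` class, the `validate_plan` function, and the cluster names are all hypothetical, and letting the Managers share a job with the last host clusters is an assumption made to model the all-in-one case.

```python
from dataclasses import dataclass, field


@dataclass
class UpgradeJob:
    """One pass through a hypothetical upgrade wizard."""
    edges: set = field(default_factory=set)   # edge clusters picked in this job
    hosts: set = field(default_factory=set)   # host clusters picked in this job
    managers: bool = False                    # also upgrade the NSX Managers


def validate_plan(jobs, all_edges, all_hosts):
    """Return a list of ordering problems; an empty list means the plan is valid."""
    problems = []
    edges_done, hosts_done = set(), set()
    for number, job in enumerate(jobs, start=1):
        edges_done |= job.edges
        hosts_done |= job.hosts
        # Hosts may share a job with the edges (the parallel default), but not
        # while some edge cluster is still waiting for a later job.
        if job.hosts and edges_done != set(all_edges):
            problems.append(f"job {number}: host clusters selected before all edges")
        # Managers come last, once every host cluster has been covered.
        if job.managers and hosts_done != set(all_hosts):
            problems.append(f"job {number}: managers selected before all hosts")
    return problems


# Hypothetical inventory and two candidate plans.
edges = {"edge-cluster-1", "edge-cluster-2"}
hosts = {"wld-cluster-a", "wld-cluster-b"}

staggered = [
    UpgradeJob(edges={"edge-cluster-1"}),
    UpgradeJob(edges={"edge-cluster-2"}),
    UpgradeJob(hosts={"wld-cluster-a"}),
    UpgradeJob(hosts={"wld-cluster-b"}, managers=True),
]
too_early = [
    UpgradeJob(edges={"edge-cluster-1"}),
    UpgradeJob(hosts={"wld-cluster-a"}),
]

print(validate_plan(staggered, edges, hosts))
print(validate_plan(too_early, edges, hosts))
```

The staggered plan spreads the work over four maintenance windows and passes the check, while the second plan is flagged because a host cluster is selected while one edge cluster is still pending.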

VCF Security Enhancements

Read-Only Access Role, Local and Service Accounts

A new ‘view-only’ role has been added to VCF 4.1. For some context, let’s talk a bit now about what happens when logging into the SDDC Manager.

First, you provide a username and password. This information is sent to SDDC Manager, which then sends it to the SSO domain for verification. Once verified, SDDC Manager can see which role the account has been assigned.

In previous versions of Cloud Foundation, the role was either Administrator or Operator.

Now, there is a third role available called ‘Viewer’. As its name suggests, this is a view-only role with no ability to create, delete, or modify objects. Users who are assigned this role may not see certain items in the SDDC Manager UI, such as the User screen. They may also see a message saying they are unauthorized to perform certain actions.
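As a rough illustration of the three roles, the Python sketch below models a permission check in which the Viewer can only view. The `Role` enum, the action names, and `is_allowed` are hypothetical and are not the SDDC Manager implementation. The sketch also makes no attempt to model the differences between Administrator and Operator, which this post does not describe.

```python
from enum import Enum


class Role(Enum):
    ADMIN = "ADMIN"
    OPERATOR = "OPERATOR"
    VIEWER = "VIEWER"


# Hypothetical action names; the post only says a Viewer cannot
# create, delete, or modify objects.
READ_ACTIONS = {"view"}
WRITE_ACTIONS = {"create", "modify", "delete"}


def is_allowed(role, action):
    """Return True if the role may perform the action in this simplified model."""
    if action in READ_ACTIONS:
        return True
    if action in WRITE_ACTIONS:
        return role is not Role.VIEWER
    raise ValueError(f"unknown action: {action}")


for role in Role:
    print(role.value, {action: is_allowed(role, action) for action in ("view", "modify")})
```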

Also new, VCF now has a local account that can be used during an SSO failure. To understand why this is needed, consider what happens when the SSO domain is unavailable for some reason: the user would not be able to log in. To address this, administrators can now configure a VCF local account called admin@local. This account can be used to perform certain actions until the SSO domain is functional again. The VCF local account is defined in the deployment worksheet and used in the VCF bring-up process. If bring-up has already been completed and the local account was not configured, a warning banner is displayed in the SDDC Manager UI until the local account is configured.
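The idea behind the local account can be sketched in a few lines of Python: the local account is verified without contacting SSO, so it keeps working while SSO is down. Everything here except the account name `admin@local` is hypothetical, including the `login` function, the `SSOUnavailable` exception, and the stub verifiers. How SDDC Manager actually routes logins is not described in this post.

```python
LOCAL_ACCOUNT = "admin@local"  # account name taken from the post


class SSOUnavailable(Exception):
    """Raised by the (hypothetical) SSO check when the SSO domain is down."""


def login(username, password, sso_verify, local_verify):
    """Return which path authenticated the user, or None for bad credentials."""
    if username == LOCAL_ACCOUNT:
        # Verified locally, so this path does not depend on the SSO domain.
        return "local" if local_verify(password) else None
    # Every other account needs SSO; this call may raise SSOUnavailable.
    return "sso" if sso_verify(username, password) else None


def sso_down(username, password):
    """Stub that simulates an SSO outage."""
    raise SSOUnavailable("SSO domain unreachable")


def check_local(password):
    """Stub verifier with a placeholder secret, for demonstration only."""
    return password == "example-only"


print(login(LOCAL_ACCOUNT, "example-only", sso_down, check_local))
try:
    login("alice@vsphere.local", "secret", sso_down, check_local)
except SSOUnavailable as err:
    print("SSO login failed:", err)
```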

Lastly, SDDC Manager now uses new service accounts to streamline communications between SDDC Manager and the products within Cloud Foundation. These service accounts follow VVD guidelines for pre-defined usernames and are administered through the admin user account.

VCF Data Protection Enhancements

As described in this blog, with VCF 4.1, SDDC Manager backup-recovery workflows and APIs have been improved to add capabilities such as backup management, backup scheduling, retention policy, on-demand backup, and automatic retries on failure. The improvements also include public APIs for the third-party ecosystem and certified backup solutions from Dell PowerProtect.
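For readers unfamiliar with retention policies and automatic retries, the Python sketch below shows one simple way such logic can work. It is a generic illustration and not the SDDC Manager implementation: the function names, the "keep N days but never fewer than M backups" rule, and the retry count are all assumptions.

```python
from datetime import datetime, timedelta


def backups_to_delete(timestamps, now, keep_days, keep_min):
    """Pick backups older than keep_days, always sparing the newest keep_min."""
    newest_first = sorted(timestamps, reverse=True)
    protected = set(newest_first[:keep_min])
    cutoff = now - timedelta(days=keep_days)
    return [t for t in newest_first if t < cutoff and t not in protected]


def run_with_retries(backup, max_attempts=3):
    """Call backup() until it succeeds or max_attempts is reached."""
    for attempt in range(1, max_attempts + 1):
        try:
            return backup()
        except OSError:
            if attempt == max_attempts:
                raise  # give up and surface the last failure


# Example: four backups taken 1, 5, 20, and 40 days ago.
now = datetime(2020, 10, 10)
history = [now - timedelta(days=d) for d in (1, 5, 20, 40)]
print(backups_to_delete(history, now, keep_days=7, keep_min=3))

# Example: a backup target that fails twice before succeeding.
attempts = []


def flaky_backup():
    attempts.append(1)
    if len(attempts) < 3:
        raise OSError("backup target unreachable")
    return "backup-ok"


print(run_with_retries(flaky_backup))
```

With these inputs, only the 40-day-old backup is selected for deletion: the 20-day-old one is past the seven-day window but is spared because it is among the three newest.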

VxRail Software Feature Updates

VxRail Networking Enhancements

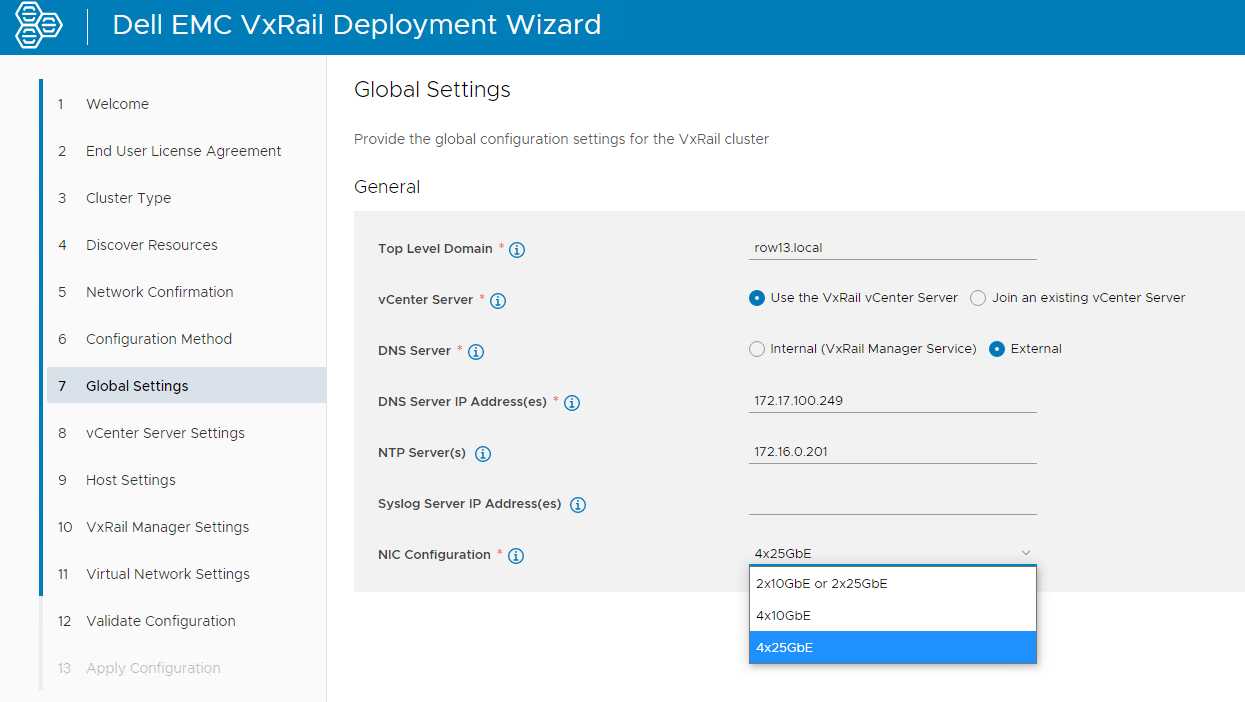

VxRail 4 x 25Gbps pNIC redundancy

VxRail engineering continues to innovate in areas that drive more value to customers, and the latest VCF on VxRail release follows through on that. New in this release, customers can use the automated VxRail First Run process to deploy VCF on VxRail nodes using 4 x 25Gbps physical port configurations to run the VxRail System vDS for system traffic such as Management, vSAN, and vMotion. The physical port configuration of the VxRail nodes would include 2 x 25Gbps NDC ports and an additional 2 x 25Gbps PCIe NIC ports.

In this 4 x 25Gbps setup, NSX-T traffic would run on the same System vDS. But what is great here (and where the flexibility comes in) is that customers can also choose to separate NSX-T traffic onto its own NSX-T vDS that uplinks to separate physical PCIe NIC ports by using SDDC Manager APIs. This ability was first introduced in the last release and can also be leveraged here to expand the flexibility of VxRail host network configurations.

The figure below illustrates the option to select the base 4 x 25Gbps port configuration during VxRail First Run.

Figure 7

By allowing customers to run the VxRail System vDS across the NDC NIC ports and PCIe NIC ports, customers gain an extra layer of physical NIC redundancy and high availability. This has already been supported with 10Gbps based VxRail nodes. This release now brings the same high availability option to 25Gbps based VxRail nodes. Extra network high availability AND 25Gbps performance!? Sign me up!
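The redundancy benefit is simple arithmetic, sketched below in Python. The adapter labels and the `remaining_gbps` helper are hypothetical, and the two-port NDC-only layout is included purely for comparison. The sketch only adds up nominal link speeds and says nothing about how traffic is actually balanced across uplinks.

```python
def remaining_gbps(uplinks, failed_adapter):
    """Sum the nominal speed of uplinks that are not on the failed adapter."""
    return sum(speed for adapter, speed in uplinks if adapter != failed_adapter)


# Each uplink is (adapter, nominal speed in Gbps).
ndc_only = [("NDC", 25), ("NDC", 25)]
ndc_plus_pcie = [("NDC", 25), ("NDC", 25), ("PCIe", 25), ("PCIe", 25)]

for name, uplinks in (("NDC only", ndc_only), ("NDC + PCIe", ndc_plus_pcie)):
    print(name, "after NDC failure:", remaining_gbps(uplinks, "NDC"), "Gbps")
```

If the whole NDC adapter fails, the NDC-only layout is left with no uplinks, while the 4 x 25Gbps layout still has the two PCIe ports available to the System vDS.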

VxRail Hardware Platform Updates

Recently introduced support for the ruggedized D-Series VxRail hardware platforms (D560/D560F) continues to expand the range of VxRail hardware platforms supported in the Dell Technologies Cloud Platform.

These ruggedized and durable platforms are designed to meet the demand for more compute, performance, storage, and, more importantly, operational simplicity, delivering the full power of VxRail for workloads at the edge, in challenging environments, or in space-constrained areas.

These D-Series systems are a perfect match for the VCF Remote Cluster features introduced in Cloud Foundation 4.1.0. Together they enable Cloud Foundation with Tanzu on VxRail to reach space-constrained and challenging ROBO/Edge sites, run cloud native and traditional workloads there, and extend existing VCF on VxRail operations to these locations. Cool, right?!

To read more about the technical details of VxRail D-Series, check out the VxRail D-Series Spec Sheet.

Well, that about covers it for this release. The innovation train continues. Until next time, feel free to check out the links below to learn more about DTCP (VCF on VxRail).